Saptarshi Ghosh

AI-generated art is part of the larger realm of generative art, which refers to art created autonomously by systems such as computer programs or machines. In this piece, we take a short trip through time to explore the critical moments in the development of the fascinating world of machine-generated art.

Origins of Computer-Generated Art

The beginnings of art created using computers can be traced to the 1950s. However, the history of autonomously created art goes back to ancient times. For example, the ancient Greeks are said to have let the wind blow over the strings of the Aeolian harp, which would then generate music on its own. The Incas used a system of knotted fibre strings called quipu (often nicknamed ‘talking knots’) to collect data and keep records. Aesthetically intricate and logically robust, the quipu has been described as an antecedent of modern computer programming languages.

Courtesy: Tate

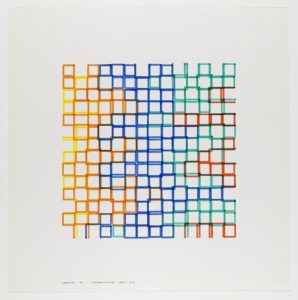

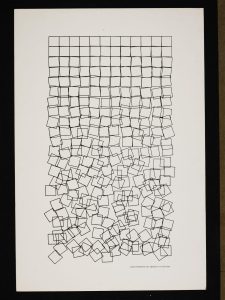

In the 1960s, engineers and mathematicians in the circle of Max Bense at the University of Stuttgart, Germany, began experimenting with computers to create art. Frieder Nake and Georg Nees became some of the earliest practitioners of computer art, using mainframe computers, plotters and algorithms to create visual pieces. They were influenced by Bense’s philosophy of Generative Aesthetics, which postulated that aesthetic objects could be generated using exact rules and theorems. Bense, a German philosopher and semiotician, intended to introduce an objective, scientific approach to aesthetics. His theoretical framework was meant as an antithesis to fascism: he believed that art could be made immune to political abuse only by divorcing it from emotion.

Courtesy: V&A

Georg Nees and Frieder Nake are considered pioneers in the field of computer-generated art. Nees, a German academic, is credited with the first exhibition of computer-generated artworks, held in 1965 and curated by Max Bense. A few months later, he exhibited again alongside Frieder Nake, a computer scientist and mathematician, at Galerie Wendelin Niedlich in Stuttgart. Much of Nees’s and Nake’s work features geometric patterns drawn on paper in Chinese ink by a high-precision flatbed plotter.
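To make the idea of generating an aesthetic object from exact rules more concrete, here is a minimal Python sketch loosely inspired by Nees’s plotter drawings such as Schotter, in which a grid of squares grows progressively more disordered. It is not a reconstruction of Nees’s original program: the function name `disordered_grid_svg`, the grid size and the disorder rule are all hypothetical choices for illustration, and the output is simply an SVG string that a browser (or, in principle, a pen plotter) could render.

```python
import math
import random


def disordered_grid_svg(cols=12, rows=22, size=20, seed=1):
    """Return an SVG drawing of a grid of squares whose disorder grows row by row.

    Hypothetical rule set for illustration only: each square is rotated and
    shifted by a random amount whose upper bound increases linearly with its row.
    """
    rng = random.Random(seed)  # a fixed seed makes the drawing reproducible
    margin = size
    width = cols * size + 2 * margin
    height = rows * size + 2 * margin
    half = size / 2
    shapes = []
    for row in range(rows):
        # 0.0 for the top row, 1.0 for the bottom row
        disorder = row / (rows - 1) if rows > 1 else 0.0
        for col in range(cols):
            # Centre of the undisturbed square
            cx = margin + col * size + half
            cy = margin + row * size + half
            # Random rotation (up to 45 degrees) and offset, scaled by the row's disorder
            angle = rng.uniform(-1, 1) * disorder * math.pi / 4
            cx += rng.uniform(-1, 1) * disorder * half
            cy += rng.uniform(-1, 1) * disorder * half
            corners = []
            for dx, dy in ((-half, -half), (half, -half), (half, half), (-half, half)):
                # Rotate each corner offset around the square's centre
                x = cx + dx * math.cos(angle) - dy * math.sin(angle)
                y = cy + dx * math.sin(angle) + dy * math.cos(angle)
                corners.append(f"{x:.2f},{y:.2f}")
            shapes.append(f'<polygon points="{" ".join(corners)}" fill="none" stroke="black"/>')
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{width}" height="{height}">'
        + "".join(shapes)
        + "</svg>"
    )
```

Calling `disordered_grid_svg()` and writing the returned string to a `.svg` file produces the drawing. Because the random generator is seeded, the same parameters always yield the same image; changing the rule (for example, making disorder grow non-linearly) changes the result while the procedure itself stays exact.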

Manfred Mohr, Vera Molnár and Herbert W. Franke were other pioneers in Europe; across the Atlantic, A. Michael Noll and Béla Julesz, two scientists working at Bell Laboratories, were the torchbearers of this form of art.

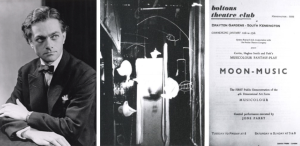

Emergence of Cybernetics and Gordon Pask’s Revolutionary MusiColour

The history of contemporary machine learning technologies can be traced to the interdisciplinary science of cybernetics, which emerged after World War II. Practised in the US and England, cybernetics attempted to understand the workings of the human brain and, through it, the fundamental mechanisms governing both organic and computational systems. Cyberneticians studied human cognition by examining the brain’s most basic units, neurons, which inspired them to design adaptive and autonomous machines.

Artificial intelligence developed in parallel with cybernetics during the 1950s. There were two main approaches to AI: symbolic AI and machine learning. Symbolic AI involved explicitly programming computers with rules and symbolic representations of knowledge; machine learning, on the other hand, sought to have machines learn by themselves from data and experience.

In 1953, Gordon Pask, an English cybernetician and psychologist, developed a remarkable machine that generated a wide array of lights in response to sound input. The MusiColour machine was used in concerts, where the sound of the musical instruments triggered the light show during the performance. A reactive electronic aesthetic system that adjusted its responses over time, for instance by ceasing to react to repetitive sounds, the MusiColour was a predecessor to modern adaptive machine learning tools.

Harold Cohen’s AARON

The next major development came in the 1970s, when Harold Cohen, a British-born artist working in the United States, developed a computer program called AARON that generated art according to programmed rules. The works produced by AARON differed from the earlier computer-generated art of Nake and Nees.

Courtesy: Computer History Museum

Most importantly, AARON could imitate the irregularity of freehand drawing. Unlike its predecessors, which generated abstractions, later versions of AARON could draw specific objects in their programmed style. Cohen also noticed that AARON could generate, from his instructions, forms he had not imagined before. Though restricted to a single encoded style, AARON could draw a potentially unlimited number of images in that style.

Initially, AARON generated works that could be described as abstract, and they were often compared to Jackson Pollock’s art. Cohen even displayed works by AARON at Documenta 6 in Kassel, Germany, in 1977.

Arrival of a New Generation of Generative Artists

The field of generative art found a new lease of life in the early 2000s with the arrival of artists such as Casey Reas and Ben Fry.

Courtesy: Centre for Art, Science and Technology at MIT

Reas earned his master’s degree in 2001 at the Massachusetts Institute of Technology (MIT) Media Lab, where he was part of the Aesthetics and Computation Group. He generates both static and dynamic images from short instructions, which he expresses in different media, such as natural language, computer code and digital simulations. Since 2012, Reas has incorporated broadcast images into his works, distorting them algorithmically to produce abstractions. He has exhibited internationally, including at Ars Electronica in Austria and ZKM in Germany.

Courtesy: www.benfry.com

Fry earned both his master’s degree and his PhD at the MIT Media Lab. His doctoral dissertation, titled ‘Computational Information Design’, was influential for introducing seven stages of visualising data. Indeed, Fry’s work is arguably better understood as an application of data visualisation processes than as a vehicle for authentic artistic expression.
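Fry’s seven stages are acquire, parse, filter, mine, represent, refine and interact. The minimal Python sketch below chains them on a tiny dataset to show how each stage feeds the next. Everything concrete here is a hypothetical stand-in: the toy data, the function names and the text-based “bar chart” are illustrative assumptions, not Fry’s own code.

```python
# Hypothetical toy data standing in for the "acquire" stage
# (in practice this would come from a file, sensor or web source).
RAW = """city,visitors
Stuttgart,120
Kassel,80
Linz,
Karlsruhe,95"""


def acquire():
    return RAW


def parse(text):
    # Split the CSV text into (name, value-string) records, skipping the header
    lines = text.strip().splitlines()[1:]
    return [tuple(line.split(",", 1)) for line in lines]


def filter_records(records):
    # Keep only records whose value is a non-negative integer
    return [(name, int(value)) for name, value in records if value.strip().isdigit()]


def mine(records):
    # Derive a simple statistic: the largest value, used later for scaling
    return max(value for _, value in records)


def represent(records, max_value, width=20):
    # Map each value to a text bar whose length is proportional to the maximum
    return [(name, "#" * round(width * value / max_value)) for name, value in records]


def refine(rows):
    # Improve legibility: longest bar first, labels aligned in one column
    rows = sorted(rows, key=lambda row: len(row[1]), reverse=True)
    label_width = max(len(name) for name, _ in rows)
    return [f"{name:<{label_width}} {bar}" for name, bar in rows]


def interact(lines, query):
    # Stand-in for interaction: let a user narrow the view with a search term
    return [line for line in lines if query.lower() in line.lower()]


records = filter_records(parse(acquire()))
chart = refine(represent(records, mine(records)))
print("\n".join(chart))
print(interact(chart, "kassel"))
```

Keeping each stage as a separate function mirrors the idea that the stages can be revisited and swapped independently; the incomplete “Linz” record, for example, is dropped at the filter stage before it can distort the scaling.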

In 2001, Reas and Fry developed the programming language Processing, which helped the visual design and electronic arts communities learn the basics of computer programming in a visual context. Processing is still widely used by artists, designers and educators.

Invention of GAN and the Rise of GANism

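The current AI-powered image-making frenzy owes much to the invention of Generative Adversarial Networks (GANs) by Ian Goodfellow and his colleagues in 2014. A GAN is a novel approach to generative modelling that pits two neural networks against each other: a generator, which produces candidate images, and a discriminator, which tries to tell those images apart from real ones. As the generator learns to fool the discriminator, its output comes to resemble the training data. This enabled machines to generate new images in the style of existing ones, leading to the recent boom in AI image generators.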
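For readers curious about the mechanics, the adversarial game in the original GAN formulation can be written as a minimax objective. Here $G$ is the generator, $D$ the discriminator (which outputs the probability that its input is real), $p_{\text{data}}$ the distribution of real training images and $p_z$ the noise distribution from which the generator draws its inputs:

$$
\min_G \max_D V(D, G) =
\mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x)\right]
+ \mathbb{E}_{z \sim p_z(z)}\left[\log\left(1 - D(G(z))\right)\right]
$$

The discriminator tries to maximise $V$ by assigning high probability to real images and low probability to generated ones, while the generator tries to minimise it by producing images $G(z)$ that the discriminator mistakes for real.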

In 2015, Google released DeepDream, a computer vision program based on a convolutional neural network, which could be used to generate psychedelic and surreal images. DeepDream is not a GAN, however, and artists did not begin experimenting widely with GANs until around 2017.

Courtesy: The Guardian

In recent years, there has been a surge in programs that use text-to-image algorithms to generate images from textual prompts. Examples include OpenAI’s DALL-E (announced in 2021), Google’s Imagen and Parti (announced in May and June 2022, respectively) and Microsoft’s NUWA-Infinity. With the release of Stable Diffusion in August 2022, text-to-image generation became far more accessible, since the model could run on personal hardware.

Future of Endless Possibilities

The growing number of exhibitions on AI and art in recent years is clear evidence of the increasing acceptance of AI-generated art.

The field achieved another milestone in 2018, when an AI-generated artwork sold at Christie’s for a whopping $432,500! The work, a portrait made by the French collective Obvious, was generated by an algorithm trained on 15,000 portraits painted between the 14th and 20th centuries. Titled Portrait of Edmond de Belamy, it resembled the style of Francis Bacon and attracted immense attention.

Courtesy: Dezeen

With talented artists like Sofia Crespo, Robbie Barrat, Helena Sarin, Harshit Agrawal and Anna Ridler working with cutting-edge machine learning technologies, we can’t wait to see what the future has in store for us!
